Anyone who works with AI systems encounters answers that seem plausible but are not correct. This is due to the way language models work: they calculate the most likely continuation of a text instead of checking facts. This is exactly where grounding and hallucination become relevant.

Grounding ensures that a model does not speculate on the basis of learned patterns but relies on documented information. Hallucinations occur when this basis is missing or when the model attempts to construct an answer despite missing data. Both concepts are crucial for the stable operation of AI systems, especially in regulated or sensitive areas.

Why grounding is essential

Grounding describes the connection of a model to clear, verifiable data. It separates language generation from the factual accuracy of the content. For an AI to make reliable statements, the origin, structure and timeliness of the information it uses must be clearly documented. This mechanism prevents models from reasoning solely on the basis of probabilities.

Without grounding, the result is statements that are linguistically convincing but may be technically wrong. This can quickly cause problems, particularly in medicine, the legal sector or technical applications. Models then deliver results that are consistently formulated but bear no relation to the actual data. Grounding provides the necessary stability here.

How hallucinations arise

A hallucination occurs when a model generates content that cannot be traced to a verifiable source. This can range from invented details to unjustified conclusions. The cause lies in the basic principle of language models: they favor probable formulations, not correct content. Even well-trained systems are not exempt from this.

Hallucinations occur especially when inputs are unclear or when the model is pushed to produce an answer despite missing data. They are not a malfunction but a systemic property. The risk can therefore only be reduced, not eliminated, through clear rules, clean data and technical restrictions.

Grounding in practice

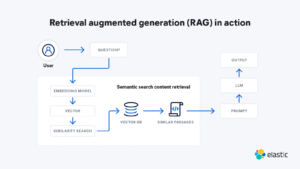

Grounding is relevant wherever specifications are binding and content changes regularly. The importance of a clean data basis is particularly evident in administrative processes. Modern systems use techniques such as RAG (Retrieval-Augmented Generation), which separate the formulation of an answer from its sources: the model generates the text, but draws only on verified records, legal bases or guidelines supplied through a search or database layer.
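The separation between retrieval and formulation can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production RAG pipeline: the `Record` type, the keyword-overlap scoring and the prompt wording are hypothetical choices made for readability, and the actual call to a language model is left out. Real systems typically retrieve through a search engine or a vector index instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """A verified source record (hypothetical schema for this sketch)."""
    source_id: str
    text: str


def retrieve(query: str, records: list[Record], k: int = 2) -> list[Record]:
    """Return up to k records ranked by shared words with the query (toy scoring)."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(r.text.lower().split())), r) for r in records]
    # Records with no overlapping term are never handed to the model.
    scored = [(score, r) for score, r in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [r for _, r in scored[:k]]


def build_grounded_prompt(question: str, context: list[Record]) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    sources = "\n".join(f"[{r.source_id}] {r.text}" for r in context)
    return (
        "Answer only from the sources below and cite their IDs. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


# Usage with two hypothetical records; the prompt would then go to a language model.
records = [
    Record("guideline-7", "applications must be submitted within 30 days"),
    Record("faq-2", "office hours are monday to friday"),
]
question = "within how many days must applications be submitted"
context = retrieve(question, records)
prompt = build_grounded_prompt(question, context)
```

The model only ever sees the passages that the retrieval layer returns, which is what ties its wording to verified records.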

A practical example: an AI system is to draft a decision based on a current administrative guideline. Without grounding, the model would choose a probable formulation, possibly quoting sections inaccurately or adding passages that do not appear in the current version. With RAG, on the other hand, the system retrieves the binding legal source, uses the version stored there and formulates the text strictly within this framework. This keeps the draft factually correct and legally compliant.
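The step "uses the version stored there" can be sketched as a lookup of the guideline version that is in force on a given date. This is a hypothetical example: the `GuidelineVersion` schema and the rule that a newer `valid_from` date supersedes all older versions are assumptions, and real legal sources may also carry expiry dates or transitional rules.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class GuidelineVersion:
    """One stored version of a guideline (hypothetical schema)."""
    guideline_id: str
    version: str
    valid_from: date
    text: str


def version_in_force(versions: list[GuidelineVersion], guideline_id: str,
                     on: date) -> Optional[GuidelineVersion]:
    """Return the latest version already in force on the given date, or None."""
    candidates = [
        v for v in versions
        if v.guideline_id == guideline_id and v.valid_from <= on
    ]
    # Assumption: a newer valid_from supersedes every older version.
    return max(candidates, key=lambda v: v.valid_from, default=None)


versions = [
    GuidelineVersion("admin-guideline", "2022-1", date(2022, 1, 1), "old wording"),
    GuidelineVersion("admin-guideline", "2024-1", date(2024, 3, 1), "current wording"),
]
current = version_in_force(versions, "admin-guideline", date(2024, 6, 1))
```

If the function returns `None`, a grounded system would decline to draft the decision rather than fall back on the model's own wording.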

Typical fields of application for grounding

Medical coding and clinical document analysis

Administrative processes with fixed legal bases

Technical documentation and product-relevant data

Compliance processes with binding regulations

Chart source: Elastic

Data quality, versioning and organizational measures

Data quality is the basis of functioning grounding. Only clearly structured, consistently maintained and traceably versioned data prevents AI systems from drawing on outdated or contradictory content. This applies equally to technical documents, product information, legal requirements and internal knowledge bases. Outdated or ambiguous data leads directly to incorrect results.
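Some of these quality requirements can be checked automatically before data is made available for grounding. The sketch below is one possible approach, assuming records are plain dictionaries with the hypothetical fields `doc_id`, `version` and `text`: it flags records without version metadata and document versions that exist with contradictory content. The sample records are invented for illustration.

```python
from collections import defaultdict

# Fields every record must carry before it may be used for grounding (assumed schema).
REQUIRED_FIELDS = ("doc_id", "version", "text")


def find_quality_issues(records: list[dict]) -> list[str]:
    """Report records with missing metadata and versions with conflicting content."""
    issues = []
    texts_by_version = defaultdict(set)
    for i, rec in enumerate(records):
        missing = [f for f in REQUIRED_FIELDS if not rec.get(f)]
        if missing:
            issues.append(f"record {i}: missing {', '.join(missing)}")
            continue
        texts_by_version[(rec["doc_id"], rec["version"])].add(rec["text"])
    # The same document version must not exist with two different texts.
    for (doc_id, version), texts in texts_by_version.items():
        if len(texts) > 1:
            issues.append(f"{doc_id} v{version}: {len(texts)} conflicting texts")
    return issues


records = [
    {"doc_id": "manual-A", "version": "2", "text": "Torque: 12 Nm"},
    {"doc_id": "manual-A", "version": "2", "text": "Torque: 15 Nm"},
    {"doc_id": "manual-B", "text": "Max load: 40 kg"},
]
issues = find_quality_issues(records)
```

Such checks only catch structural problems; whether a text is factually correct still requires the organizational review described next.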

Clear organizational processes are also required. Companies must define responsibilities for data maintenance, test cycles and quality controls. This includes clear rules on how systems should handle uncertainty, as well as procedures that make conspicuous results visible and allow them to be corrected. Only when technical and organizational measures work together will an AI system remain reliable and stable in the long term.

Important points for stable results

Data must be clearly structured, consistently maintained and clearly versioned.

Systems must not force an answer and must report uncertainties.

Responsibilities for data maintenance and quality control must be defined.

Monitoring, feedback and regular checks stabilize ongoing operations.
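The rule that systems must not force an answer can be sketched as a relevance threshold: if no retrieved source is relevant enough, the system reports the gap instead of generating text. This is a hypothetical sketch; the `GroundedResult` type, the `(source_id, score, passage)` hit format and the threshold of 0.75 are assumptions, and a suitable threshold depends on the retrieval method actually used. The `echo_generator` function merely stands in for a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class GroundedResult:
    answer: Optional[str]  # None when the system declines to answer
    sources: list[str] = field(default_factory=list)
    uncertain: bool = False
    note: str = ""


def answer_or_abstain(question: str,
                      hits: list[tuple[str, float, str]],
                      generate: Callable[[str, list[str]], str],
                      min_score: float = 0.75) -> GroundedResult:
    """hits are (source_id, relevance score, passage) tuples from a retrieval step."""
    supported = [h for h in hits if h[1] >= min_score]
    if not supported:
        # Report the gap instead of letting the model improvise an answer.
        return GroundedResult(answer=None, uncertain=True,
                              note="No sufficiently relevant source found.")
    passages = [passage for _, _, passage in supported]
    return GroundedResult(answer=generate(question, passages),
                          sources=[sid for sid, _, _ in supported])


# Stand-in for a real model call: it simply echoes the supporting passages.
def echo_generator(question: str, passages: list[str]) -> str:
    return " ".join(passages)


weak = answer_or_abstain("What is the limit?", [("doc-1", 0.40, "unrelated text")], echo_generator)
strong = answer_or_abstain("What is the limit?",
                           [("doc-1", 0.90, "The limit is 5."), ("doc-2", 0.50, "other")],
                           echo_generator)
```

Returning the source IDs alongside the answer also supports the monitoring and feedback loop, because reviewers can see which records an answer relied on.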

Frequently asked questions about grounding and hallucination in AI systems

How can you tell if an answer is hallucinated?

An answer is considered hallucinated if it cannot be traced back to a verifiable source. Systems that output references to their sources make this check easier.
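When a system outputs references, part of this check can be automated. The sketch below assumes a hypothetical citation format in which source IDs appear in square brackets; it verifies that an answer cites at least one source and that every cited ID was actually among the retrieved sources. It does not verify that the cited passage really supports the statement, which still needs a content check.

```python
import re

# Assumed citation format: source IDs in square brackets, e.g. "[guideline-7]".
CITATION = re.compile(r"\[([A-Za-z0-9_.:-]+)\]")


def check_citations(answer: str, retrieved_ids: set[str]) -> dict:
    """Check that an answer cites sources and that every cited ID was actually retrieved."""
    cited = CITATION.findall(answer)
    unknown = sorted(set(cited) - retrieved_ids)
    return {
        "has_citations": bool(cited),
        "unknown_sources": unknown,
        "traceable": bool(cited) and not unknown,
    }


ok = check_citations("Applications are due within 30 days [guideline-7].", {"guideline-7"})
bad = check_citations("Applications are due within 14 days [guideline-9].", {"guideline-7"})
```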

Is grounding enough to rule out hallucinations?

No. Grounding reduces the risk, but language models remain probabilistic. Clear rules, data quality and ongoing controls are needed.

Which data is suitable for grounding?

Clearly structured and maintained databases such as technical documentation, product data, legal specifications or medical regulations are suitable. Consistency and versioning are crucial.

How is grounding implemented technically?

Typical methods are retrieval mechanisms, databases, vector searches or APIs. The model generates the formulation, while the content comes from verified sources.
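A vector search ranks documents by the similarity between embedding vectors, commonly measured as cosine similarity. The following minimal sketch shows only the ranking step: the three-dimensional vectors and document IDs are hypothetical, whereas real systems obtain high-dimensional embeddings from an embedding model and usually rely on a dedicated vector index rather than a linear scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]],
          k: int = 2) -> list[tuple[str, float]]:
    """Return the k source IDs whose embeddings are most similar to the query."""
    scored = sorted(((cosine(query_vec, vec), sid) for sid, vec in index.items()),
                    reverse=True)
    return [(sid, score) for score, sid in scored[:k]]


# Hypothetical toy embeddings; real ones come from an embedding model.
index = {
    "doc-a": [1.0, 0.0, 0.0],
    "doc-b": [0.0, 1.0, 0.0],
    "doc-c": [0.7, 0.7, 0.0],
}
hits = top_k([1.0, 0.1, 0.0], index)
```

The IDs returned here would then select the verified passages that are passed to the model for formulation.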